Privacy Concerns

Quantitative Findings

In the privacy section of the survey, participants answered questions about their willingness to use AI in situations that did or did not involve sensitive information. For general tech support in which no sensitive information was shared, 55% of participants would prefer to work with a human, 38% would prefer a chatbot, and the remaining 7% wrote in other responses. However, if the tech support involved sensitive information such as an account number, 75% of disabled and 69% of nondisabled participants would prefer human support.

A similar pattern emerged when visual description AI users were asked about using AI to read sensitive information. First, these participants indicated their willingness to read sensitive information with an AI tool that did not save or share the images. In this scenario, 55% of sighted and 60% of BLV users stated they would prefer to use AI over a human reader. However, when the AI tool would share images with a tech company, only 21% of sighted and 16% of BLV users said they would prefer the AI, with the rest preferring a human reader. Thus, a majority of participants would consider using AI for private information if the AI kept the information “on device” or deleted it immediately. Participants were concerned about the privacy of sensitive information, and many reported reluctance to use AI tools for reading or processing this information if the tool were likely to share it with others.

All participants were asked whether they believed using AI was more or less private than working with humans. Overall, regardless of disability status, the participants thought AI was slightly less private than working with humans: 18% stated that AI is much less private than humans, and 37% stated that AI is slightly less private than humans. Another 29% stated that AI and humans are equally private. Only 16% stated that AI is somewhat or much more private than humans.

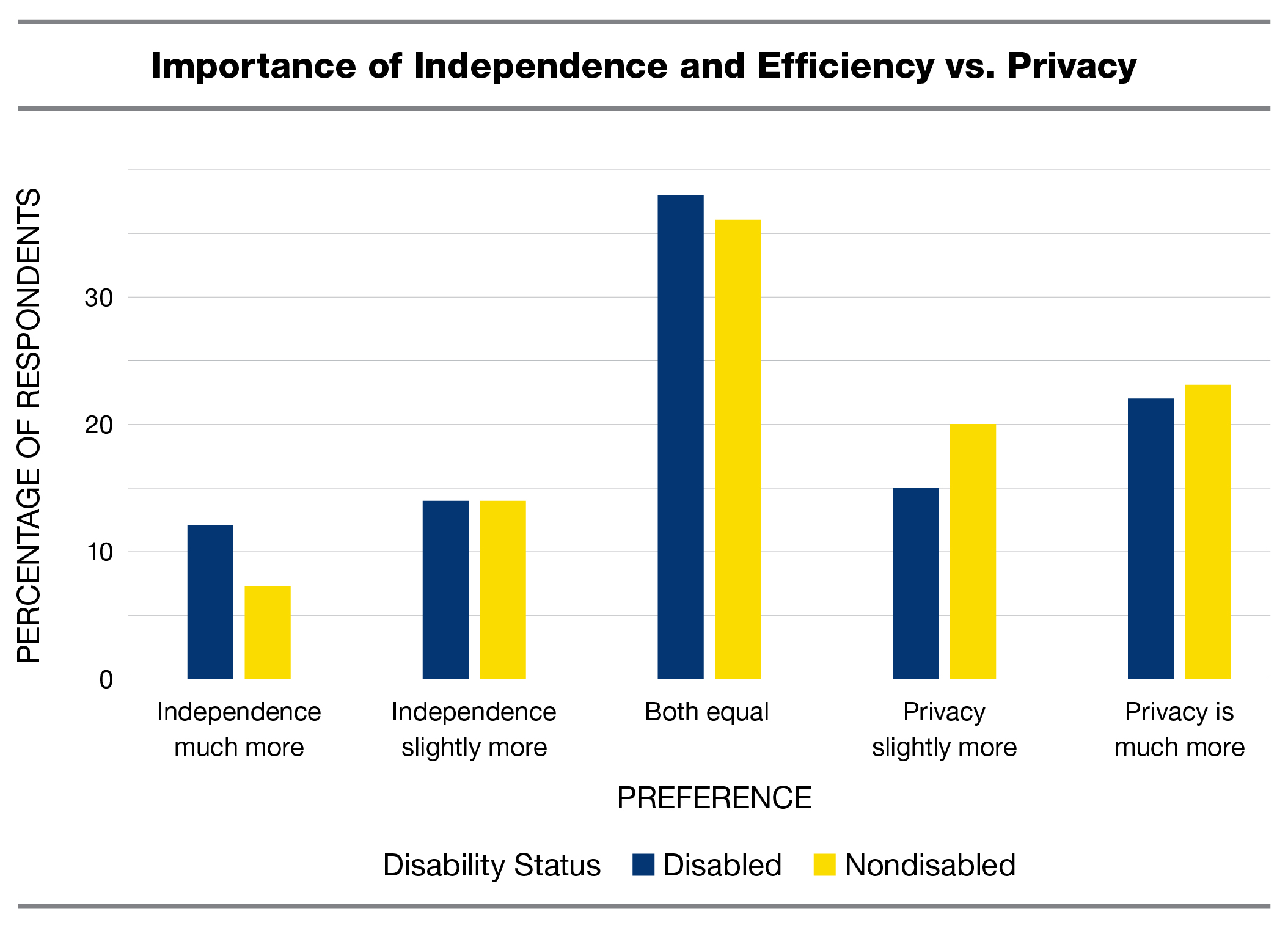

Then, all participants indicated how they prioritized trade-offs between the benefits of AI (efficiency and independence) and the cost of reduced privacy. As the graph below shows, many participants were more concerned about privacy costs than about AI benefits, but this pattern was slightly weaker among disabled participants.

Two associations were found between participants’ race and their feelings about using AI versus humans for assistance. First, when asked which option they would prefer for general tech support without any sensitive information involved, 60% of White participants, but only 52% of non-White participants, preferred to work with a human. If sensitive information would be shared during the tech support chat, though, White and non-White participants preferred humans at equal rates. Second, when asked whether AI was more or less private than humans, 56% of White participants felt that AI was somewhat or much less private than humans, but only 49% of non-White participants did. This suggests that non-White Americans may be slightly more trusting of AI (or less trusting of humans) than White Americans, but this finding is preliminary, and more study is needed to identify the specific racial groups and cultural expectations that might drive this effect.

Qualitative Findings

Several general themes emerged in response to an open-ended question about participants’ feelings toward AI and privacy. Broadly, participants discussed concerns related to privacy and data practices, AI benefits balanced against privacy concerns, and perceptions of AI surveillance. Most participants who expressed concerns about privacy and data practices were disabled; however, a substantial number of nondisabled participants also raised similar issues. AI’s handling of personal information was the most frequently discussed aspect of privacy. Participants described concerns about what information was collected (e.g., identity, location, financial, or health information), as well as how that information was used and where it ultimately went.

Participants also raised concerns or questions about how AI companies were using their information, including uncertainty about whether data was being shared with third-party or “unknown” entities. Several participants wondered where their information was going and whether it was being used for purposes such as profiling or targeted advertising. Participants additionally questioned whether and how their personal information was used to train large language models. For instance, participants expressed a concern that their data was being used without their knowledge or consent. One participant explained, “I think there are tiers to sharing information. For sensitive information, more needs to be done to [assure] users their data is safe. For day to day tasks, I believe that big companies are already collecting this information in other ways (...) I am not convinced that most tasks are giving information to AI companies that we are not already sharing.”

Another prominent area of concern related to data leaks and breaches. Participants expressed concrete fears regarding hacking, breaches, and data leaks, often paired with the sentiment that once personal information is released, the release cannot be reversed. As one participant explained, “Although it appears more safeguards are being put into place, hacking information is a major concern with AI.” Finally, some participants expressed general privacy concerns without elaborating in sufficient detail to support a more specific theme, or their concerns did not clearly fit into one of the above categories.